The Stanford AI Index Just Confirmed What Hiring Teams Are Feeling. Here Is What to Do About It

Stanford's 2026 AI Index landed this week with findings that every hiring leader needs to understand. AI is reshaping who gets hired, how fast, and what assessment actually means in 2026.

Stanford University's Institute for Human-Centered AI released its 2026 AI Index on April 13. At over 400 pages, it is the closest thing the field has to a neutral annual audit - tracking model performance, investment flows, hiring data, scientific output, and documented AI incidents from dozens of external sources.

Most of the coverage this week focused on the US-China AI performance gap narrowing to 2.7 percentage points, down from leads of 17 to 31 percentage points in late 2023. That is a legitimate story.

But for enterprise hiring teams, the more important findings are buried deeper in the report. They confirm something that HR leaders in technology, financial services, and GCCs (global capability centres) have been sensing but struggling to quantify: AI is fundamentally changing who is in your candidate pool, what their CVs mean, and how much your current assessment process can actually tell you about their capability.

Here are the findings that matter most for hiring in 2026 and what they mean in practice.

Finding 1: Entry-Level Employment Is Already Falling and It Is Going to Accelerate

Employment among software developers aged 22 to 25 has plummeted nearly 20% since 2024, even as their older colleagues' headcount grows. The pattern repeats in other jobs with higher levels of AI exposure, like customer service. Meanwhile, firm surveys indicate executives expect this trend to accelerate, with planned headcount reductions outpacing recent cuts. (Stanford HAI)

For hiring teams this has two immediate implications that pull in opposite directions.

The first is that junior roles are becoming harder to justify internally, which means the candidates applying for mid-level roles are doing so without the two to three years of foundational experience those roles previously assumed. The talent pipeline is thinning at the base and the gap is showing up mid-funnel.

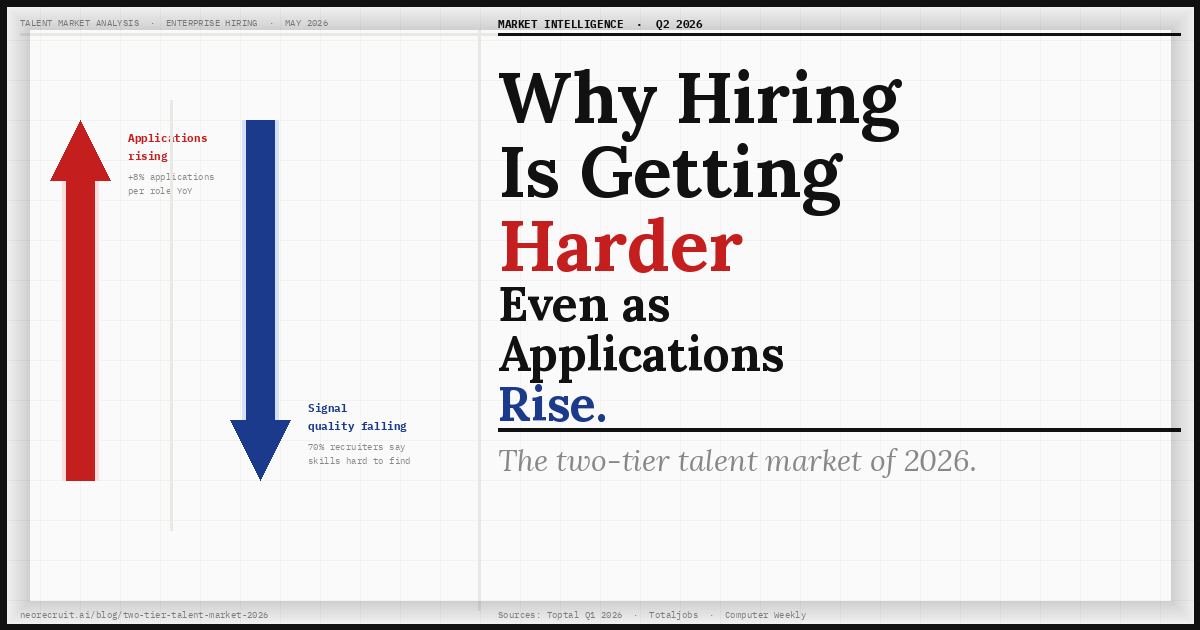

The second is that the candidates who are applying for those mid-level roles are doing so in a more competitive market than at any point in the last decade. More applicants per role. More polished CVs. More sophisticated interview preparation. And more AI assistance at every stage of that preparation.

The Stanford data confirms the shift has already happened. It is not a prediction. The labor market for young tech workers has changed rapidly. Employment for software developers aged 22 to 25 fell by nearly 20 percent. The hiring pipeline that enterprise HR teams built their processes around no longer looks the same.

Finding 2: AI Adoption Is Faster Than Any Previous Technology But the Public Does Not Trust It

Generative AI reached 53% global population adoption within three years, faster than the personal computer or the internet. The estimated value of generative AI tools to US consumers reached $172 billion annually by early 2026, with the median value per user tripling between 2025 and 2026. (Stanford HAI)

This is the adoption context that makes the cheating problem in hiring structural rather than marginal. When a technology reaches 53% global adoption in three years, the assumption that candidates in your pipeline are not using it is not a policy position. It is a fantasy.

Only 33% of Americans expect AI to make their jobs better, compared to a global average of 40%. (Humai) Candidates using AI to pass interviews are not primarily motivated by malice. Many are motivated by anxiety. The technology is ubiquitous, the job market is tighter than it has been in years, and the tools to assist with interviews are cheap, accessible, and, as we documented in our earlier analysis, specifically engineered to be undetectable by standard proctoring software.

The adoption speed Stanford documents is the same adoption speed that explains why CodeSignal found cheating on technical assessments more than doubled, from 16% to 35%, in a single year. These trends are not unrelated. They are the same trend viewed from different angles.
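To make the size of that jump concrete, here is a minimal arithmetic sketch using only the two CodeSignal figures quoted above (16% and 35%). The function name `change_summary` is ours, chosen for illustration.

```python
def change_summary(before_pct, after_pct):
    """Summarise a change between two percentages."""
    return {
        # absolute change, in percentage points
        "points": after_pct - before_pct,
        # how many times larger the new rate is than the old one
        "ratio": after_pct / before_pct,
    }


print(change_summary(16, 35))  # {'points': 19, 'ratio': 2.1875}
```

In other words, the reported rate rose by 19 percentage points and ended up a little over twice where it started.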

Finding 3: AI Excels at Reasoning Tasks But Still Fails at Judgment

Despite predictions that AI development may hit a wall, the top models just keep getting better. By some measures, they now meet or exceed the performance of human experts on tests that aim to measure PhD-level science, math, and language understanding. SWE-bench Verified, a software engineering benchmark, saw top scores jump from around 60% in 2024 to almost 100% in 2025. (MIT Technology Review)

This is the finding that most directly challenges what standard technical assessments are actually measuring. If AI can solve PhD-level problems and score near-perfect on software engineering benchmarks, then any assessment that tests whether a candidate can answer questions that AI can also answer is not testing the candidate. It is testing their access to AI tools.

However, AI still struggles in plenty of other areas. Because the models learn by processing enormous amounts of text and images rather than by experiencing the physical world, AI exhibits jagged intelligence. (MIT Technology Review) It can solve a complex quantum mechanics problem but struggles with spatial reasoning. It can write production-quality code but cannot reliably answer questions that require genuine situational judgment - the specific tradeoff you made at 2am on a deadline, the reason you chose this architecture over that one given the constraints you actually faced.

This is precisely why adaptive conversational interviews produce fundamentally different signal from standard assessments. A fixed question asking a candidate to explain their approach to system design can be answered by any capable AI tool. A follow-up question asking about the specific decision they described three sentences ago - why they chose that database schema given the latency constraint they just mentioned - requires the candidate to actually have been there. AI can generate an answer, but the delay and the loss of coherence that come with doing so in live conversation are detectable in ways that a fixed assessment never reveals.
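One way to picture the latency signal is as a comparison against the candidate's own baseline. The sketch below is purely illustrative and does not describe how NeoRecruit or any other product works: the function name, the 2x threshold, and the sample timings are all assumptions made for the example.

```python
from statistics import median


def flag_slow_followups(latencies_s, is_followup, ratio=2.0):
    """Return indices of follow-up answers whose response delay exceeds
    `ratio` times the candidate's median delay on scripted questions.

    latencies_s: seconds between end of question and start of answer
    is_followup: True where the question was generated from the
                 candidate's previous answer (hypothetical labelling)
    """
    scripted = [t for t, f in zip(latencies_s, is_followup) if not f]
    baseline = median(scripted)
    return [
        i
        for i, (t, f) in enumerate(zip(latencies_s, is_followup))
        if f and t > ratio * baseline
    ]


# Hypothetical session: three scripted questions, then two follow-ups.
latencies = [2.0, 2.5, 3.0, 7.5, 2.8]
followup = [False, False, False, True, True]
print(flag_slow_followups(latencies, followup))  # [3]
```

A flag like this would be a prompt for human review rather than a verdict. Thoughtful candidates also pause on hard follow-ups, so any real system would need to weigh delay alongside the coherence of the answer itself.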

The Stanford finding about jagged intelligence is not just a curiosity about model limitations. It is a map of where human assessment still matters most and what kinds of questions actually differentiate genuine capability from AI-assisted performance.

Finding 4: The Productivity Gains Are Real But Concentrated

A small group of companies is pulling sharply ahead in the race to generate real financial returns from artificial intelligence. Nearly three quarters of AI's economic value is captured by just one fifth of organisations, revealing a stark and widening divide between a small group of AI leaders and the majority of businesses still stuck in pilot mode. (PwC)

PwC's 2026 AI Performance Study, released the same week as the Stanford Index, adds important context. The organisations capturing AI's value are not those that have deployed the most tools. They are those that have used AI to redesign their processes rather than automate existing ones.

This distinction matters for hiring specifically. An organisation that has used AI to make its ATS faster is automating an existing process. An organisation that has used AI to change what its interview actually measures - shifting from scripted competency questions to adaptive conversations that probe genuine reasoning - has redesigned the process. The second type of organisation is building a structurally different quality of team.

The PwC finding about concentration of value is a forward indicator. The hiring quality gap between organisations that have redesigned their assessment and those that have not will become visible in team performance data within 18 to 24 months. The organisations that are still relying on CVs and unstructured interviews to evaluate candidates who have AI assistance in their back pocket are systematically selecting for performance in an AI-assisted environment, not for genuine capability.
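The concentration PwC describes is easier to grasp as a per-organisation ratio. The sketch below is a back-of-the-envelope calculation, not a figure from the PwC study: it assumes "nearly three quarters" is exactly 75% and "one fifth" is exactly 20%, and the function name is ours.

```python
def per_org_value_ratio(value_share_leaders, org_share_leaders):
    """Average value captured per leading organisation, divided by the
    average value captured per organisation in the remaining group."""
    leaders = value_share_leaders / org_share_leaders
    rest = (1 - value_share_leaders) / (1 - org_share_leaders)
    return leaders / rest


# Assumed inputs: 75% of value held by 20% of organisations.
print(round(per_org_value_ratio(0.75, 0.20), 1))  # 12.0
```

Under those assumptions, the average leader captures roughly twelve times the value of the average organisation in the other 80%.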

Finding 5: The Expert-Public Perception Gap Is Widening

Only 10% of Americans say they are more excited than concerned about AI in daily life. Among AI experts, that number is 56%. On jobs, the split is 73% among experts versus 23% among the public. (The Neuron)

For enterprise hiring teams, this perception gap has a practical consequence that is easy to overlook: candidates are anxious. The same technology that HR teams are deploying to screen faster and assess more candidates is the technology that candidates believe is going to make their jobs harder to find and less stable once found.

This matters for candidate experience in a market where employer brand and process quality directly affect offer acceptance rates. A hiring process that feels like a black box - AI screening with no explanation, assessments with no feedback, automated rejections with no rationale - feeds the public anxiety the Stanford data documents. A process that is transparent about what is being evaluated and why, that gives candidates a genuine opportunity to demonstrate their actual thinking rather than their ability to rehearse for a fixed question set, is meaningfully different in the eyes of someone who already distrusts the technology involved.

The Stanford finding is not a reason to avoid AI in hiring. It is a reason to use it in a way that produces explainable, defensible outcomes rather than opaque verdicts.

What the Stanford Index Means for Enterprise Hiring in Practice

Taken together, the 2026 Stanford AI Index describes a hiring environment where the candidate pool has been reshaped by AI adoption, where standard assessment methods are measuring the wrong thing, where public anxiety about the technology is structurally high, and where the organisations extracting real value from AI are those that have redesigned their processes rather than automated their existing ones.

For enterprise HR and TA leaders, the operational implications are specific.

The junior talent pipeline you have relied on for the last decade looks different. The candidates entering your mid-level roles increasingly do not have the foundational experience those roles assumed. Your assessment needs to test for the reasoning and judgment capability those candidates actually have, not for the educational credentials or years of experience they no longer uniformly carry.

Standard technical assessments are no longer reliable signals. When AI can score near-perfect on software engineering benchmarks, a benchmark-style assessment in your hiring process is producing noise rather than signal. The question is not whether to assess technical skill but how to assess it in a way that AI cannot fully substitute for.

The public anxiety around AI in hiring is a candidate experience problem. Transparent, explainable assessment outcomes matter more now than they did two years ago. Candidates who do not understand why they were screened out will assume the AI got it wrong. Candidates who go through an assessment process that feels fair and substantive - where they were genuinely listened to rather than asked to perform for a camera - are significantly more likely to accept offers and refer others.

NeoRecruit was built for exactly this environment. The adaptive AI avatar conducts interviews that probe genuine reasoning - generating follow-up questions from what each candidate actually said - producing assessment signal that standard tools no longer generate reliably. NeoEye (patent pending) detects AI-assisted fraud in the session layer. Every session produces a structured, auditable assessment with explainable scoring rationale - giving hiring teams the documented evidence they need to make defensible decisions and respond to the transparency expectations the Stanford data documents.

The Stanford AI Index does not tell you which hiring platform to use. It tells you that the hiring environment has changed in ways that make the choice of platform more consequential than it has ever been. The organisations that recognise that shift now are the ones that will be in the 20% capturing most of AI's value rather than the 80% still running pilots.

Book a free pilot at neorecruit.ai
