Agentic AI Has Changed How Candidates Cheat. Most Hiring Platforms Have Not Caught Up.

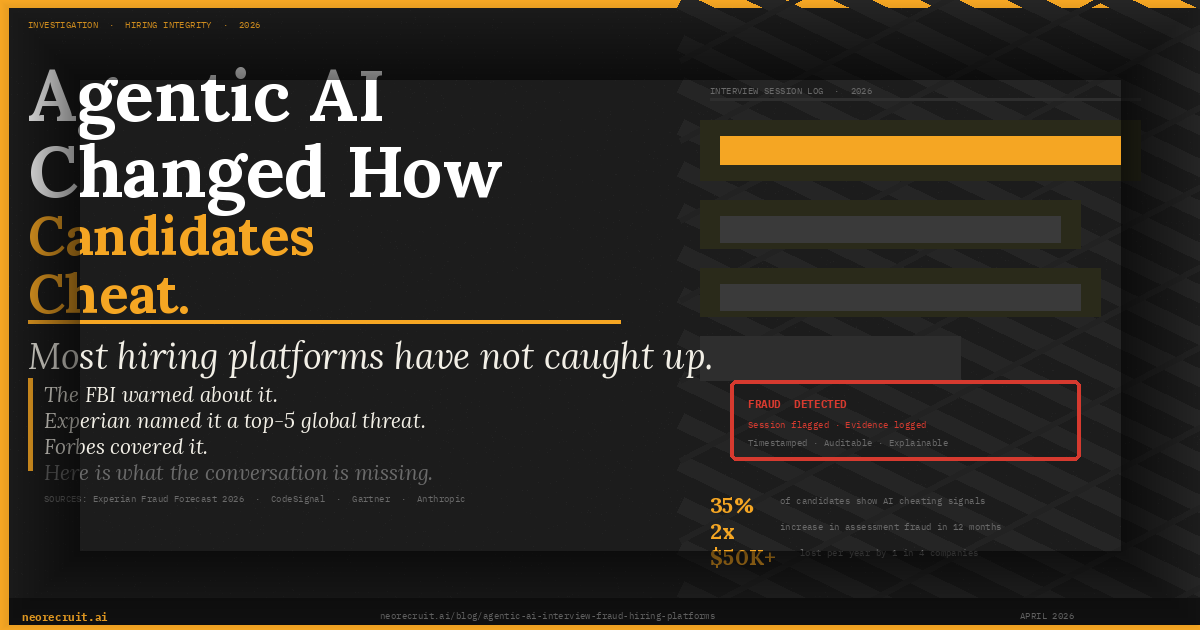

Experian named deepfake hiring a top-5 global fraud threat for 2026. Forbes covered it. The FBI warned about it. Here is what the conversation is missing and what actually works.

In January 2026, Experian released its annual Future of Fraud Forecast. For the first time, deepfake job candidates appeared alongside financial crime and machine-to-machine fraud as a top-five global threat. The report did not frame this as a future risk. It described it as an operational reality that employers were already encountering without knowing it.

The FBI and the Department of Justice had issued multiple warnings in 2025 about documented instances of North Korean operatives using deepfake technology and real-time identity manipulation to gain employment at hundreds of US companies. These were not unsophisticated attacks. They passed video interviews. They received offers. Some were onboarded and given access to internal systems before anyone realised.

In April 2026, Forbes ran a piece on how agentic HR platforms are beginning to fight back. The framing was broadly correct: AI is being used to catch the fraud that AI is enabling. But the conversation is missing something important.

Not all solutions are the same. And the distinction matters more than most hiring teams currently understand.

The Problem Is Structural, Not Technological

When most people think about interview fraud in 2026, they picture a candidate in front of a camera with a second screen running ChatGPT out of frame. That version of the problem is real, but it is the least sophisticated form of what is happening.

CodeSignal published data in February 2026 showing that cheating on technical assessments had doubled in a single year, from 16% to 35%. Anthropic publicly acknowledged they had to rewrite their own technical interview questions because candidates were using Claude to generate answers in real time. A Gartner survey found that 39% of candidates reported using AI during job applications. On the platform Blind, one in five US professionals admitted to secretly using AI in interviews.

These are not outliers. They are signals of a structural shift. The tools candidates now use do not look like cheating. They look like preparation. A candidate running a real-time AI copilot during a video interview does not appear distracted. They appear thoughtful. They pause, appear to consider the question, and then deliver an answer that is polished, relevant, and completely hollow.

The standard detection response to this has been to add more monitoring. Browser lockouts. Tab-switching alerts. Eye-tracking software. Screen sharing requirements. These tools were designed for a world where cheating meant opening a second browser tab. They were not designed for a world where the assistance operates at the graphics layer of the candidate's device, invisible to screen capture.

The most sophisticated AI copilot tools in use today cannot be detected by standard proctoring software. This is not speculation. It is the documented design intent of tools like Cluely and similar products, which explicitly advertise their invisibility to screen sharing as a feature. Monitoring a broken process more intensively does not fix the process.

The Difference Between Monitoring and Architecture

Here is the distinction the broader conversation is not making clearly enough. Most hiring platforms, including many that are now adding fraud detection features, operate on the same underlying architecture. A candidate receives a set of questions. The candidate records responses, either live or asynchronously. The platform then analyses those responses and the candidate's behaviour during recording.

Fraud detection in this model is forensic. It examines what happened. It looks for signals that something was wrong with the performance that was already delivered.

This is useful. It is not sufficient, because the interview format itself creates the vulnerability. Fixed questions, asked in a predictable sequence, give candidates enough information to prepare AI-assisted responses before the question is even finished. When a candidate knows that a structured behavioural interview will ask some variant of "Tell me about a time you led a team through a difficult situation," the AI copilot has already been generating possible answers for the last ninety seconds.

The architectural alternative is to remove the fixed question entirely. An adaptive conversational interview does not have a script. The AI interviewer generates each follow-up question based on the specific content of the previous answer. If a candidate says they led a cross-functional team through a product launch, the next question is not a generic probe about leadership. It is a specific question about that product launch, those constraints, that team.

The candidate cannot pre-load an answer to a question that does not exist until they have already spoken. The AI copilot cannot prepare a response in advance to a follow-up it has not yet heard. And critically, the time it takes to prompt an AI tool, read its output, and recite it becomes detectable in the natural rhythm of conversation in a way it rarely is in a scripted format. This is not an incremental improvement on standard video interviewing. It is a different thing entirely.

What Agentic Fraud Actually Looks Like in 2026

The Experian forecast and the Forbes coverage focused heavily on deepfake identity fraud, which is real and growing. But for most enterprise hiring teams, the more prevalent daily problem is not identity impersonation. It is competence impersonation.

Picture a candidate who genuinely exists, is genuinely on the call, and is genuinely themselves, but who is delivering answers generated by an AI tool in real time. The person who joins your team is not a North Korean operative. They are a real hire who cannot actually do the job they were assessed for.

According to a 2025 survey, 23% of companies reported losing more than $50,000 in a single year due to fraudulent candidates. 10% lost more than $100,000. These figures do not capture the full cost, which includes onboarding investment, missed sprint targets, security exposure from access granted to someone whose assessed capability did not match their actual capability, and the time spent managing a performance problem that began at the interview stage.

The bad hire is the fraud that nobody calls fraud. In technical roles in particular, the stakes are acute. A software engineer who passed a coding assessment with AI assistance and is now writing production code with AI assistance, unsupervised, on a codebase they do not understand, is an active liability. Not because AI-assisted code is inherently bad, but because the person managing the AI does not have the judgment to know when it is wrong.

Anthropic's team identified this problem in their own hiring process. When the people building the most advanced AI in the world have to redesign their interview questions because candidates are cheating with that AI, the signal is clear: the old assessment architecture is broken.

What Actually Works

The answer is not more monitoring. It is better signal. Better signal means three things operating together.

Interview design that makes AI assistance structurally difficult. Adaptive conversation that generates follow-up questions from previous answers. Probing for the specific reasoning behind a specific decision the candidate described. Asking about the tradeoffs that were visible at the time, not the polished retrospective. No AI copilot operates fast enough to keep up with a skilled adaptive interviewer asking about the specific details of what you just said.

Multimodal detection for what slips through. Audio pattern analysis. Visual behaviour analysis. Response pattern analysis. Timing and latency signals. Cross-referencing what was said against the internal consistency of the answers across the full conversation. Not any one of these alone, but all of them simultaneously, generating a risk score with timestamped evidence that a human reviewer can examine.

Auditable outputs for every session. Not a pass or fail verdict. A structured record of what was flagged, when, and why. Explainable outputs that a hiring manager can review and, where warranted, override, and that satisfy the human oversight requirements now being introduced in data protection frameworks in the UK, the EU, and across the GCC.
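The following minimal Python sketch shows one way per-detector flags might be fused into a single reviewable record, which makes the idea of a multimodal risk score with timestamped evidence more concrete. It is illustrative only and does not describe how NeoEye works internally. The `Flag` schema, the four modality names, the weights, and the rule that halves the score when only one modality fires are all hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass
class Flag:
    """One timestamped observation from a single detector (hypothetical schema)."""
    modality: str       # e.g. "audio", "visual", "response", "timing"
    timestamp_s: float  # seconds from the start of the session
    strength: float     # detector confidence in [0, 1]
    reason: str         # plain-language explanation shown to the reviewer


# Illustrative weights only (they sum to 1.0, so the score stays in [0, 1]).
# A real system would calibrate these on labelled sessions.
WEIGHTS = {"audio": 0.25, "visual": 0.25, "response": 0.30, "timing": 0.20}


def session_report(flags: list[Flag], min_modalities: int = 2) -> dict:
    """Fuse per-detector flags into a risk score plus the evidence behind it.

    Each known modality contributes its strongest flag, scaled by its weight.
    If fewer than `min_modalities` distinct modalities fired, the score is
    halved, so one noisy detector cannot dominate the result on its own.
    """
    strongest: dict[str, float] = {}
    for f in flags:
        if f.modality in WEIGHTS:
            strongest[f.modality] = max(strongest.get(f.modality, 0.0), f.strength)

    score = sum(WEIGHTS[m] * s for m, s in strongest.items())
    if len(strongest) < min_modalities:
        score *= 0.5

    # Every flag is kept as evidence, in time order, so a reviewer can jump
    # to each moment in the recording.
    evidence = [
        {"t": fl.timestamp_s, "modality": fl.modality, "strength": fl.strength, "why": fl.reason}
        for fl in sorted(flags, key=lambda fl: fl.timestamp_s)
    ]
    # Deliberately no pass/fail field: the decision stays with a human.
    return {"risk_score": round(score, 3), "modalities": sorted(strongest), "evidence": evidence}
```

The corroboration rule follows the point above that no single signal should decide the outcome. The output stops at a score and an ordered evidence trail. It does not issue a pass or fail verdict, so a hiring manager can inspect each flagged moment and override the result.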

NeoRecruit was built around exactly this architecture. The adaptive AI avatar conducts real conversations, not scripted screenings. NeoEye (patent pending) analyses audio, video, behaviour, and response patterns simultaneously across every session. Every flagged moment is timestamped and documented. Hiring teams receive a structured risk score and the evidence behind it, not a black-box verdict.

Our clients report a 90% reduction in pre-screening time and 5x more candidates evaluated per hiring cycle. Across 12,500+ interviews conducted in India, the UK, the US, the UAE, and Southeast Asia, we have seen the full range of what AI-assisted cheating looks like in practice. We have built the detection architecture from that experience, not from theory.

The Questions HR Leaders Should Be Asking Their Vendors

The Forbes coverage and the Experian forecast will accelerate investment in fraud detection features across the hiring platform market. In the next six months, you will see announcements from every major video interviewing platform about new anti-cheat capabilities.

Most of them will be adding monitoring layers to the same fixed-format interview architecture that created the vulnerability in the first place. Before accepting a vendor's fraud detection claim at face value, ask five questions.

Does your interview format adapt based on what the candidate actually said, or does it follow a predetermined sequence? If the answer is predetermined, the format is scriptable.

What specifically does your detection system analyse? Tab switching and browser behaviour are 2019-era signals. If the answer does not include audio pattern analysis, behavioural signals, and response consistency across the full conversation, the detection is incomplete.

Can you show me a flagged session with the specific timestamped evidence? If the vendor can only show you a score or a verdict, they cannot show you the reasoning. That is not explainable AI. That is a black box with a number on it.

What happens when a candidate uses an AI tool that operates beneath the screen sharing layer? If the vendor's answer involves screen sharing detection, they have not solved the problem that tools like Cluely were designed to circumvent.

How does your system handle the difference between a candidate who paused because they were thinking and a candidate who paused because they were reading an AI-generated answer? Pause detection without context is noise. The meaningful signal is in the pattern across the full conversation, not any single moment.
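A thinking pause and a reading pause can only be told apart against the candidate's own baseline. The sketch below shows one hypothetical way to express that. It compares each response latency against the candidate's median, using the median absolute deviation as a robust spread measure. It reports a sustained pattern only when a meaningful share of turns are unusually slow. The function name, the threshold `k = 3`, the 30% share, and the five-turn minimum are assumptions chosen for illustration, not a validated method.

```python
import statistics


def latency_pattern(latencies_s: list[float], k: float = 3.0, min_share: float = 0.3) -> dict:
    """Flag pauses that are long *for this candidate*, then ask whether they form a pattern.

    latencies_s: seconds between the end of each question and the start of the answer.
    A turn is 'unusual' if its latency exceeds the candidate's median by more than
    k median absolute deviations. Only when at least `min_share` of turns are
    unusual is the session marked as showing a sustained pattern.
    """
    if len(latencies_s) < 5:
        # Too few turns to establish a personal baseline.
        return {"unusual_turns": [], "sustained": False}

    med = statistics.median(latencies_s)
    # Floor the spread so identical latencies do not cause a divide-by-zero.
    mad = statistics.median([abs(x - med) for x in latencies_s]) or 0.1

    # Only slower-than-usual turns count; quick answers are never flagged here.
    unusual = [i for i, x in enumerate(latencies_s) if (x - med) / mad > k]
    return {"unusual_turns": unusual, "sustained": len(unusual) / len(latencies_s) >= min_share}
```

With these defaults, one isolated long pause is listed but does not mark the session, while repeated long pauses across the conversation do. In practice a latency pattern like this would be one input among several, not evidence on its own.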

Where This Goes Next

Experian's forecast predicts that 2026 will be a tipping point, with the conversation moving from HR tech circles into boardrooms, legal teams, and regulatory discussions.

The EU AI Act's core requirements for high-risk AI systems in hiring are scheduled to become enforceable in August 2026. The UK's Data (Use and Access) Act 2025 has already reformed automated decision-making rules for recruitment. Saudi Arabia and the UAE have both introduced data protection frameworks that apply to the use of AI in hiring.

The legal and compliance pressure will force hiring teams to ask harder questions about what their platforms are actually doing, what they are actually detecting, and whether the outputs are defensible. The platforms that will hold up under that scrutiny are not the ones that added a monitoring layer to an unchanged interview architecture. They are the ones that rethought the architecture itself.

The fraud problem in hiring is not a technology gap. It is a design gap. And design gaps cannot be closed by adding sensors to a broken structure.

Related reading:

- How Candidates Cheat in Online Interviews

- Top 15 Anti-Cheat Platforms for Online Interviews

- AI Proctoring vs Human Proctoring

- NeoRecruit vs HireVue


If you want to see how adaptive AI interviews and NeoEye detection work on a real role in your organisation, we will run a free pilot with no commitment required.

