How Candidates Cheat in Online Interviews (And How to Stop It)

Discover how candidates cheat in online interviews in 2026 - from AI copilots and invisible overlays to deepfakes and proxy interviews - and the proven ways to stop it.

Interview cheating has crossed a threshold in 2026. It is no longer an edge case or a fringe problem that happens to other companies. It is a systemic, growing, and commercially organised threat that is quietly undermining hiring quality across every industry.

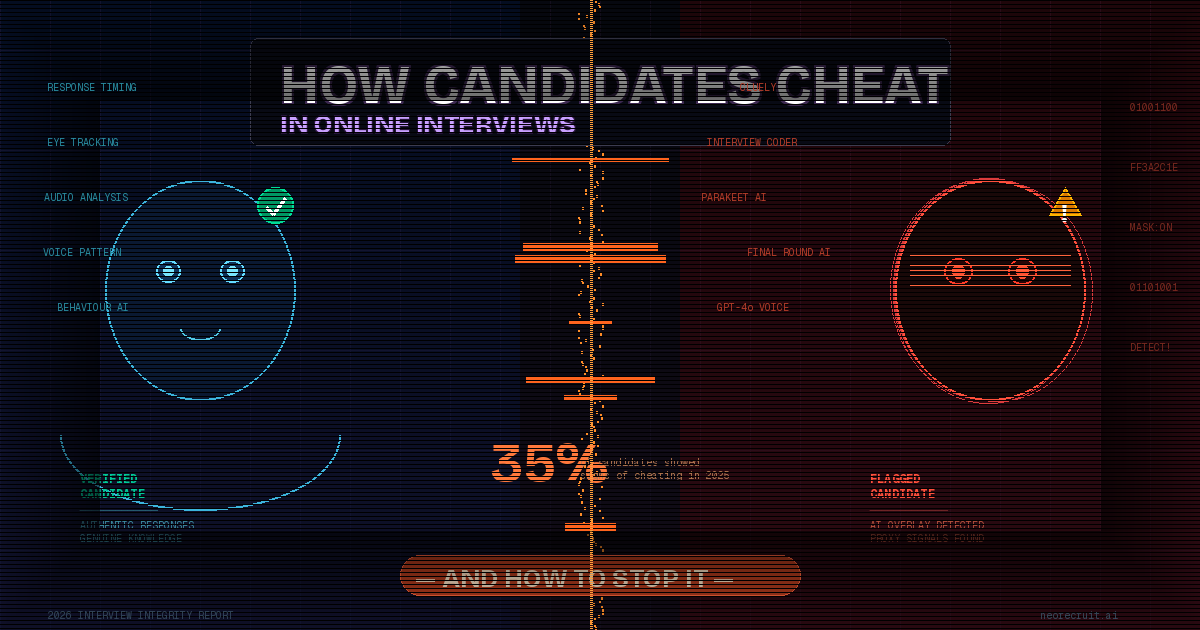

The numbers tell the story. Cheating adoption more than doubled from 15% in June 2025 to 35% by December 2025 - and if that trajectory holds, cheating will be the norm rather than the exception by late 2026. 59% of hiring managers suspect candidates of using AI tools to misrepresent themselves during the hiring process, and one in three has discovered a candidate using a fake identity or proxy in an interview.

This is no longer about candidates scribbling notes on their palms. The tools are sophisticated, affordable, and specifically engineered to be invisible to traditional detection methods. Understanding exactly how candidates cheat - and what actually stops it - is now a critical competency for every hiring team.

How Candidates Cheat: The Complete 2026 Playbook

Method 1: AI Invisible Overlays (Cluely, Interview Coder, Final Round AI)

This is the dominant cheating method of 2026 and the hardest to catch with traditional tools.

Tools like Cluely and Interview Coder use low-level graphics hooks to render content that exists only on the candidate's local display. When a candidate shares their screen via Zoom or Google Meet, the video encoding captures the desktop beneath the cheating overlay. The candidate sees a transparent heads-up display floating over their coding environment while the interviewer sees only the code editor.

For behavioural interviews, these tools capture the interviewer's voice through virtual audio drivers. The audio runs through speech-to-text engines, gets transcribed, and feeds into an LLM that generates structured responses within 1-2 seconds. The candidate simply reads the answer aloud while maintaining eye contact with the camera.

Dedicated cheating assistants like Cluely and Interview Coder account for 45% of all cheating cases, followed by voice mode on LLMs like ChatGPT at 34%.

The cheating tools of 2026 use invisible overlays that standard screen sharing cannot detect, and traditional proctoring methods like tab-switching detection and browser lockouts are obsolete against them.

The human tell: Interviewers have learned to look for eyes wandering to the side, the reflection of other apps visible on candidates' glasses, and answers that sound rehearsed or don't match questions. "I'll hear a pause, then 'Hmm,' and all of a sudden, it's the perfect answer," said one hiring manager.

Method 2: Voice-Based AI Assistants (Parakeet AI)

Voice-based tools take invisible cheating one step further - they require no screen at all.

Tools like Parakeet AI listen to interview questions through the candidate's microphone and speak AI-generated answers aloud in real time through an earpiece. The candidate simply repeats what they hear, completely hands-free. There is no typing, no tab switching, no visible screen activity - leaving almost no digital trail for traditional proctoring to detect.

This method is particularly dangerous in video interviews where screen sharing is not required, such as behavioural and competency interviews. The candidate appears fully engaged, maintains eye contact, and delivers polished answers - all while an AI whispers the script.

Method 3: Secondary Devices and Screen Mirroring

Modern cheating setups often involve secondary monitors, virtual machines, browser extensions, or automation scripts that silently fetch solutions during coding rounds.

A candidate runs the official interview on one device while using a phone, tablet, or second laptop entirely outside the browser's awareness to research answers or paste them via a Bluetooth keyboard. The proctoring software never sees the second device because it exists outside the monitored environment entirely.

Traditional methods like tab switching or using a second screen now make up only 18% of cheating cases - candidates have largely migrated away from these as they are now easily flagged.

Method 4: Proxy Interviews and Impersonation

Perhaps most worrying is how organised and commercialised interview fraud has become. On Telegram, WhatsApp, and Facebook groups, thousands of members are trading tips or offering services. One thriving niche is proxy interview services - entire agencies that will take interviews on a client's behalf.

In these cases, a highly skilled engineer completes all interview rounds while the actual hired employee has a fraction of the assessed capability. Companies discover the fraud weeks or months later when the new hire cannot perform basic tasks.

Method 5: Deepfake Video and Voice

A fraudster uses deepfake software to superimpose a different face onto their live video feed, or to animate a completely synthetic face. Advanced methods also alter head movements and expressions in real time to align with the impostor's voice.

17% of HR managers have directly encountered deepfake technology in a video interview - and the technology is improving rapidly. In some documented cases, the "candidate" on camera was a fully AI-generated avatar being puppeteered by someone else entirely.

Method 6: AI-Generated Take-Home Assessments

Any coding challenge or written assignment completed outside a live, monitored environment is now effectively a test of how well a candidate can use AI assistance. AI-generated responses are unique and rewritten in real time, so traditional plagiarism detection tools do not flag them.

A candidate can submit a perfectly structured, well-reasoned take-home assessment that they had no meaningful role in creating. The output tells you nothing about whether the person can actually perform the work under real conditions.

Why Traditional Proctoring Fails Against These Methods

Most proctoring tools were built for a world where cheating meant looking at a second screen or switching browser tabs. That world is gone.

Modern cheating tools bypass all traditional proctoring signals. Invisible overlays do not trigger tab-switch alerts. Secondary devices exist outside the browser's awareness. The proctoring software sees exactly what the cheating tool wants it to see.

Browser lockdowns cannot stop Parakeet AI speaking into an earpiece. Screen recording cannot capture an overlay that is rendered only on the candidate's local display, outside what screen sharing captures. Webcam monitoring cannot detect a second phone sitting off-camera.

The fundamental problem is that traditional proctoring is reactive - it watches for known signals of cheating. Modern cheating tools are specifically engineered to produce none of those signals.

The Behavioural Tells Your Interviewers Should Know

Sophisticated as these tools are, the people using them are not invisible to a trained eye. An overlay may be hidden from screen-sharing software, but the behavioural signatures of reading from it show up clearly under careful observation.

Watch for these signals:

Consistent response timing regardless of question difficulty: Genuine candidates respond quickly to easy questions and pause longer for complex ones. Cheaters using AI tools show suspiciously consistent timing because the software follows the same processing steps regardless of question difficulty. A candidate who takes exactly 4 seconds to answer both "Tell me your name" and "Explain database sharding" is likely waiting for their AI to generate responses.

Horizontal eye movement patterns: When people recall information, their eyes drift upward or to the side. When reading, eyes move in horizontal lines from left to right. Candidates reading from invisible overlays display this mechanical reading pattern while supposedly thinking through answers.

Overly structured, bullet-pointed spoken answers: Genuine conversational answers are slightly imperfect - people trail off, reconsider, use filler words naturally. AI-generated answers are abnormally structured, delivered as if reading from a listicle. The vocabulary is consistent throughout regardless of the emotional register of the question.

The "Hmm" pause: Interviewers have noticed that many candidates use the "Hmm" sound to buy themselves time while waiting for their AI tools to finish processing. A brief, uniform pause followed by a perfectly structured answer is a reliable signal.

Inability to go deeper: Cheaters can deliver the first layer of an answer perfectly. They cannot go deeper. If a candidate gives a flawless explanation of microservices architecture but cannot answer "walk me through a specific time this caused a problem in your last role," the gap in genuine knowledge becomes apparent.
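The response-timing signal above is the easiest one to quantify. The following is a minimal sketch, assuming the interviewer logs how many seconds pass before each answer begins and rates each question's difficulty from 1 to 5. The function names and both thresholds are hypothetical and would need tuning against real interviews; a flag here is a prompt for closer review, not proof of cheating.

```python
from statistics import mean, pstdev


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 when either series has no variance."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def timing_flags(latencies, difficulties, cv_threshold=0.15, corr_threshold=0.2):
    """Flag response delays that are uniform or unrelated to question difficulty.

    latencies: seconds of silence before each answer starts.
    difficulties: interviewer-assigned difficulty per question (1 = easy, 5 = hard).
    Both thresholds are illustrative assumptions, not validated cut-offs.
    """
    if len(latencies) != len(difficulties) or len(latencies) < 3:
        raise ValueError("need at least three paired observations")
    avg = mean(latencies)
    # Coefficient of variation: spread of the delays relative to their mean.
    cv = pstdev(latencies) / avg if avg > 0 else 0.0
    corr = pearson(latencies, difficulties)
    return {
        "cv": cv,
        "correlation": corr,
        "uniform_timing": cv < cv_threshold,
        "ignores_difficulty": corr < corr_threshold,
    }
```

A candidate who pauses about 4 seconds before every answer, easy or hard, trips both flags. A candidate who answers easy questions in a second and takes five or six on hard ones trips neither.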

What Actually Stops Cheating in 2026

1. Adaptive Conversational Interviews

The most effective anti-cheat mechanism is not surveillance - it is interview design. When a candidate provides a perfect textbook answer, an adaptive AI immediately drills down: "Can you tell me about a specific time you applied that in a project and it failed?" LLMs struggle to maintain context when forced to pivot quickly or provide highly specific personal experiences.

This is the core insight: surveillance catches lazy cheaters; adaptive conversation catches sophisticated ones.

NeoRecruit's AI avatar conducts fully adaptive, conversational interviews that probe reasoning in real time - asking follow-up questions based on each answer, digging into the "why" behind responses, and adapting dynamically when answers seem rehearsed or inconsistent. Because the interview is live and conversational, AI copilots cannot keep up. A candidate cannot prompt ChatGPT fast enough to generate a contextually accurate response to an adaptive follow-up question about their specific previous answer.

2. Multimodal Detection

Beyond conversational design, catching sophisticated cheating requires analysing multiple dimensions of the interview simultaneously - not just screen activity, but audio patterns, video behaviour, response timing, and language signals all cross-referenced in real time.

NeoRecruit's proprietary NeoEye is built specifically for this - a multimodal anti-cheat detector that generates a structured risk score with timestamped evidence across all these dimensions simultaneously, giving hiring teams auditable findings rather than a black-box verdict.
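To make "a structured risk score with timestamped evidence" concrete, here is a minimal sketch of one way such a report could be assembled. It is not NeoEye's actual method, which the article does not describe. The `Evidence` type, the channel names, the equal weights, and the peak-per-channel aggregation are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    timestamp_s: float  # seconds from the start of the interview
    channel: str        # "audio", "video", "timing", or "language"
    score: float        # 0.0 (benign) to 1.0 (strongly suspicious)
    note: str           # human-readable description for the reviewer


# Hypothetical equal weights; a real system would calibrate these.
WEIGHTS = {"audio": 0.25, "video": 0.25, "timing": 0.25, "language": 0.25}


def risk_report(evidence):
    """Combine per-channel peak scores into one weighted risk score.

    Returns the overall score, the peak per channel, and the evidence
    sorted by time so a reviewer can audit each finding.
    """
    peaks = {}
    for item in evidence:
        if item.channel not in WEIGHTS:
            raise ValueError(f"unknown channel: {item.channel}")
        if not 0.0 <= item.score <= 1.0:
            raise ValueError("score must be between 0.0 and 1.0")
        peaks[item.channel] = max(peaks.get(item.channel, 0.0), item.score)
    total = sum(WEIGHTS[channel] * score for channel, score in peaks.items())
    timeline = sorted(evidence, key=lambda item: item.timestamp_s)
    return {"risk_score": round(total, 3), "per_channel": peaks, "timeline": timeline}
```

Keeping the raw evidence list in the report, rather than returning only the number, is what makes the result auditable: each contribution to the score can be traced back to a moment in the recording.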

3. Trap Questions

Ask candidates to explain a fictional library or framework. A genuine candidate will say they have not heard of it or cannot find any documentation for it. A cheating candidate's AI will confidently hallucinate methods and syntax for a technology that does not exist. This is one of the simplest and most effective detection techniques available, and it costs an interviewer nothing.
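For teams that review transcripts afterwards, the trap-question check can be roughed out in a few lines. This is a crude sketch, assuming the answer is available as text: the phrase list and the 25-word cut-off are hypothetical, and a keyword match is no substitute for a human reading the answer.

```python
# Phrases that suggest the candidate admitted not knowing the fictional tool.
# The list is an illustrative assumption and is far from exhaustive.
ADMISSIONS = (
    "not familiar", "never heard", "haven't heard", "have not heard",
    "haven't used", "have not used", "don't know", "do not know",
    "can't find", "cannot find", "doesn't exist", "does not exist",
)


def review_trap_answer(answer, min_words=25):
    """Classify an answer to a question about a made-up library.

    Returns "ok" if the candidate admits unfamiliarity, "flag" if they give
    a long answer with no admission, and "unclear" otherwise.
    """
    text = answer.lower().replace("\u2019", "'")  # normalise curly apostrophes
    if any(phrase in text for phrase in ADMISSIONS):
        return "ok"
    if len(text.split()) >= min_words:
        return "flag"
    return "unclear"
```

An answer such as "I'm not familiar with that one, is it new?" comes back as "ok", while several confident sentences describing the invented library's API come back as "flag" for the interviewer to follow up on.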

4. Identity Verification

Require government ID verification before the interview begins. Cross-reference the face on the ID with the face on screen throughout the session. Multi-camera setups - where candidates are asked to show their environment using a second camera - significantly increase the difficulty of proxy and deepfake fraud.
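Checking the ID photo against the face on screen "throughout the session" usually means comparing face embeddings at intervals. The sketch below assumes some face-recognition model has already turned the ID photo and each sampled video frame into numeric vectors; the model itself, the function names, and the 0.6 similarity threshold are all assumptions for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def identity_drift(id_embedding, frames, threshold=0.6):
    """Return timestamps where the on-screen face stops matching the ID.

    frames: list of (timestamp_s, embedding) pairs sampled during the session.
    threshold: hypothetical cut-off; real systems calibrate it per model.
    """
    return [
        timestamp
        for timestamp, embedding in frames
        if cosine_similarity(id_embedding, embedding) < threshold
    ]
```

Sampling across the whole session, rather than matching once at the start, is what catches a mid-interview handover to a proxy.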

5. Redesign Your Assessment Types

Instead of asking candidates to write fresh code from scratch, give them broken or inefficient code and ask them to debug it. AI can generate solutions, but it struggles with spontaneous reasoning under live pressure. Problem-based assessments that require explaining a thought process in real time - rather than producing a clean output - are structurally harder to fake.

6. Signal Awareness Training for Interviewers

Train your hiring team to recognise the behavioural tells outlined above. The uniform response timing, the horizontal eye movements, the inability to go deeper than the first layer of an answer - these are learnable signals that any interviewer can be trained to spot.

The Real Cost of Getting This Wrong

The cost of hiring a fraudulent candidate exceeds $50,000 in direct losses alone - before accounting for the time wasted in onboarding, the productivity drag on the team, the security risks of a dishonest hire, and the cost of restarting the recruitment process.

The battle for interview integrity is not a problem to be solved once. It is an ongoing adversarial challenge. As cheating tools become more sophisticated, detection must evolve alongside them.

The question for every hiring team in 2026 is no longer just "can this candidate do the job?" It is "is this candidate actually who they claim to be?"

Protect Your Hiring Process with NeoRecruit

NeoRecruit combines adaptive AI interviews that make cheating structurally difficult with NeoEye's multimodal detection that makes it detectable when attempted. Your team reviews candidates based on what they actually know - not what they could look up, whisper through an earpiece, or generate with an invisible overlay.

👉 Book a demo to see how NeoRecruit protects your hiring integrity
